Overview

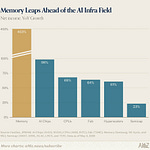

Today’s posts had a clear thread running through them: AI is moving from “helpful tool” to “handles the work”. That showed up in code review at scale, agents that remember your repo, app builders that skip the IDE, and video models that are good enough to confuse what’s real. There was also a side conversation about moats, hype cycles, and the quieter stuff like optimism and health.

The big picture

The pace is not just about smarter models; it’s about systems and workflows that make AI usable in messy, real environments: thousands of PRs, production-grade apps, ads made on a shoestring, and video that muddies what counts as proof. The winners look less like “an app with AI” and more like teams (or solo builders) who can ship, validate, and defend something concrete.

PR review at open-source scale, with 50 Codex runs in parallel

Peter Steinberger laid out a pragmatic approach to a problem most projects only dream of having: thousands of incoming PRs, duplicates piling up, and no human way to keep pace. The trick is treating each PR like metadata, spinning up dozens of parallel Codex runs to produce structured JSON reports, then rolling that up into one place for triage, clustering, and clean-up.

What’s striking is how unglamorous the solution is. It’s not a magical bot that does everything; it’s a pipeline that turns chaos into something you can sort, close, and merge with confidence.
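To make the shape of that pipeline concrete, here is a minimal Python sketch of the fan-out and roll-up. It is not Peter Steinberger’s code. `review_pr` is a hypothetical stand-in for a single Codex run: it fakes a JSON-style report from the PR’s title and files so the example runs offline. The duplicate check is also a deliberately crude assumption. It groups PRs whose titles share the same longer words and that touch the same files.

```python
import json
from collections import defaultdict
from concurrent.futures import ThreadPoolExecutor


def review_pr(pr: dict) -> dict:
    """Hypothetical stand-in for one Codex run returning a JSON-style report.

    A real pipeline would prompt the agent and parse its JSON output; this
    fake derives the report from PR metadata so the sketch runs offline.
    """
    # Crude "intent" key: the sorted longer words from the title (an assumption).
    words = sorted({w.strip(".,:") for w in pr["title"].lower().split() if len(w) > 3})
    return {
        "number": pr["number"],
        "intent": " ".join(words),
        "touches": sorted(pr["files"]),
    }


def triage(prs: list, workers: int = 50) -> dict:
    # Fan out: one review per PR, with up to `workers` running at once.
    with ThreadPoolExecutor(max_workers=workers) as pool:
        reports = list(pool.map(review_pr, prs))
    # Roll up: PRs with the same intent key and file set are likely duplicates.
    clusters = defaultdict(list)
    for report in reports:
        clusters[(report["intent"], tuple(report["touches"]))].append(report["number"])
    duplicate_groups = [sorted(nums) for nums in clusters.values() if len(nums) > 1]
    return {"reports": reports, "duplicate_groups": duplicate_groups}


prs = [
    {"number": 101, "title": "Fix typo in README", "files": ["README.md"]},
    {"number": 102, "title": "fix README typo", "files": ["README.md"]},
    {"number": 103, "title": "Add retry logic to HTTP client", "files": ["client.py"]},
]
summary = triage(prs)
print(json.dumps(summary["duplicate_groups"]))  # [[101, 102]]
```

The real work is replacing the fake `review_pr` with an actual agent call and a stricter report schema. The parallel map and the group-by are the boring part, which is rather the point.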

GitHub Copilot gets memory, and repo context becomes sticky

GitHub is pushing the idea that your coding agents should learn your repo over time, not treat every session like a blank slate. Copilot “memory” across VS Code Insiders, the CLI, the coding agent, and code review is the sort of feature that sounds small until you’ve lived through repeating the same conventions and caveats every day.

The obvious question is how teams will manage what gets remembered, what gets forgotten, and how to keep it useful rather than noisy. Still, this is the direction of travel: less prompting, more continuity.

Google AI Studio plus Antigravity points at the next coding UI

TestingCatalog says Google AI Studio is being “powered by Antigravity”, with demos focused on full-stack app building, multiplayer support, polished UI, and secure links to real services. That combo matters because it nudges AI coding away from snippets and towards complete, runnable products.

It also raises the bar for everyone else. If the default expectation becomes “type an idea, get a working app”, then differentiation moves to taste, product judgement, and distribution.

From prompt to native iOS app, including AR, in a single shot

Ernesto Lopez showed a push-up tracker built inside Rork that hooks into native iOS features and even AR. What stood out was the quiet confidence: these apps are starting to look professional by default, not like prototype fodder.

Tools like this do not remove the hard parts of building a business, but they do remove weeks of engineering friction. That changes who gets to test ideas, and how fast copycats show up.

Agents that run the business, not just the tasks

Peter Yang’s episode teaser with Nat Eliason is another data point in the “agent operator” trend: an OpenClaw bot that built a site and a product, set up Stripe, launched, and then pulled in $14,718 in three weeks. The detail worth noticing is the memory structure: summaries, daily notes, and nightly consolidation. That’s less sci-fi, more good operations.

This is how “agentic” becomes real: not by talking, but by keeping context and removing bottlenecks without constant supervision.
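The memory structure from that episode (summaries, daily notes, nightly consolidation) is easy to picture as code. The sketch below is an illustration only, not how OpenClaw actually stores memory. The `AgentMemory` class and its methods are hypothetical. Its "consolidation" step simply skips duplicates and caps the summary length, where a real agent would ask the model to summarise.

```python
from dataclasses import dataclass, field
from datetime import date


@dataclass
class AgentMemory:
    """Hypothetical agent memory: a running summary plus raw daily notes."""

    summary: list = field(default_factory=list)      # read at the start of each session
    daily_notes: dict = field(default_factory=dict)  # date -> notes written that day
    max_summary_lines: int = 20

    def note(self, day: date, text: str) -> None:
        # During the day the agent just appends; no summarising yet.
        self.daily_notes.setdefault(day, []).append(text)

    def consolidate(self, day: date) -> None:
        # Nightly job: fold that day's notes into the summary, then drop them.
        # Placeholder logic: skip exact duplicates and keep only the newest
        # `max_summary_lines` lines; a real agent would ask the model to summarise.
        for text in self.daily_notes.pop(day, []):
            if text not in self.summary:
                self.summary.append(text)
        start = max(0, len(self.summary) - self.max_summary_lines)
        self.summary = self.summary[start:]

    def context(self) -> str:
        # What gets loaded into the next session's prompt.
        return "\n".join(self.summary)


mem = AgentMemory(max_summary_lines=3)
day1, day2 = date(2025, 1, 1), date(2025, 1, 2)
mem.note(day1, "Stripe account is live")
mem.note(day1, "Landing page deployed")
mem.note(day1, "Stripe account is live")
mem.consolidate(day1)
mem.note(day2, "First sale")
mem.note(day2, "Refund issued")
mem.consolidate(day2)
print(mem.context())
```

During the day, writes are cheap appends. Once a night, the summary gets a single bounded rewrite. The context the agent reloads each session therefore stays short instead of growing forever.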

DeepMind’s Unified Latents tackles a stubborn diffusion weak spot

Robert Youssef flagged DeepMind’s Unified Latents as a clean fix to a messy part of image generation: the latent encoder and the diffusion prior not quite agreeing on what information should be kept. The idea of jointly training them, with a more principled loss, is the sort of foundational work that ends up rippling out into better quality and better control.

It’s also a reminder that not all progress is a new model name. Some of it is making the plumbing less hacky so the whole stack behaves.

AI ad production gets cheap enough to change the job

el.cine’s thread is basically a playbook for recreating the feel of a big-budget advert on pocket change. Whether you love or hate that prospect, the economics are changing. When the spend drops, the constraint becomes creative direction and judgement, not access to a studio.

Expect more content that looks “expensive” and more pressure on brands to stand out through taste rather than gloss.

Real estate goes full social feed, and AI video becomes the default

Justine Moore pointed at real estate as a practical early adopter of AI video. It makes sense: listings already live and die on attention, and the medium is moving from static photos to short, scrollable product-style clips.

There’s a thin line between “helping buyers imagine a space” and “over-selling a fantasy”, so trust and disclosure are going to matter more as these tools become normal.

If the robot video is fake, it still proves something

Chris summed up the weird moment we’re in: a humanoid “making a bed” clip is so convincing that, real or fake, it points to a breakthrough. If it’s real robotics, that’s huge. If it’s AI video, it’s also huge, because it means proof is harder to come by and synthetic footage is cheap.

We’re heading towards an era where “show me a video” is no longer evidence, and that will change everything from product demos to news.

GPT-5.3 rumours gather pace, but nobody has receipts yet

The “Garlic” rumours are back, with talk of a Feb 26 release and a meaningful jump on general benchmarks, plus stronger video and audio. Dan McAteer posted the bolder claim, while 🍓🍓🍓 added a more grounded “heard from separate sources” note and a hint that coding gains might be left to Codex.

This is the familiar pattern: excitement, a few screenshots, and a week of people trying to read the tea leaves. Until there’s an official post and people can test it, treat it as mood rather than fact.