Overview

Today’s feed sits at the crossroads of scale and craft. Space gets its usual boost with Starlink deployments and Starship hype, while the AI side swings between practical tooling for local models and agents that can install, configure, and even debug their own setup. Underneath it all, there’s a growing tension between excitement for what’s possible and fatigue with the slop that comes with it.

The big picture

The theme is acceleration, but not in a single direction. Hardware is chasing speed, agents are pushing autonomy, and chatbots are settling into daily habits. At the same time, people are asking sharper questions: what counts as real intelligence, what makes a product sticky, and how do we keep human taste in the loop when AI can fill the page in seconds?

Starlink keeps stacking wins, and reusability keeps paying off

SpaceX confirmed the deployment of 28 Starlink satellites, another steady step in the quiet, relentless work of building a global network. What stands out is the rhythm: launches are no longer rare spectacles but repeatable logistics.

The subtext is the booster record. A 23rd flight for a single booster is the sort of number that changes unit economics and expectations across the industry, not just the launch cadence.

Starship as a symbol, not just a rocket

A single photo can still do the job. Tesla Owners Silicon Valley posted a moody night shot of Starship with the caption “Starship = Hope”, and it landed because it’s less about specifications and more about what people want the project to mean.

It also taps into the sense that the next phase is about frequency and permission, with approvals and launch targets turning aspiration into schedules.

Moon mass drivers are back in the group chat

DogeDesigner’s “Mass driver on the Moon or bust!” is half meme, half manifesto. The idea of an electromagnetic launcher on the Moon keeps resurfacing because it promises something rockets struggle with: cheap, repeatable lift for bulk material.

Even as a render, it scratches the same itch as Starship: big infrastructure that makes space feel industrial rather than heroic.

A CLI that tells you what model will run before you waste an evening

Wildminder flagged llmfit, a command-line tool that inspects your hardware and tells you which LLMs are realistic choices, which quantisation to use, and what speed to expect. This is the sort of unglamorous utility that local AI needs if it’s going to move past hobbyist pain.

The appeal is simple: fewer guesswork installs, fewer “why is this crawling” moments, and a clearer sense of trade-offs before you download tens of gigabytes.
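To make the trade-off concrete, here is a minimal sketch of the kind of back-of-envelope check such a tool could run. It is not llmfit’s actual method or interface: the function names, the quantisation ladder, and the 20% overhead allowance are all assumptions made for illustration.

```python
from typing import Optional

# Hypothetical quantisation ladder, highest precision first: (label, bits per weight).
QUANT_LADDER = [("fp16", 16.0), ("8-bit", 8.0), ("4-bit", 4.0)]


def estimate_memory_gb(params_billions: float, bits_per_weight: float,
                       overhead_fraction: float = 0.2) -> float:
    """Rough memory needed to load a model, in decimal gigabytes.

    Weights take params * bits / 8 bytes. overhead_fraction is an assumed
    allowance for the KV cache and runtime buffers, not a measured figure.
    """
    weights_gb = params_billions * bits_per_weight / 8.0
    return weights_gb * (1.0 + overhead_fraction)


def pick_quantisation(params_billions: float, available_gb: float) -> Optional[str]:
    """Return the highest-precision option that fits, or None if nothing fits."""
    for label, bits in QUANT_LADDER:
        if estimate_memory_gb(params_billions, bits) <= available_gb:
            return label
    return None


def rough_tokens_per_second(weights_gb: float, bandwidth_gb_per_s: float) -> float:
    """Optimistic decode speed, assuming each token streams all weights from memory once."""
    return bandwidth_gb_per_s / weights_gb


if __name__ == "__main__":
    # A 7B-parameter model on a machine with 8 GB free for the model.
    print(pick_quantisation(7, 8))  # prints "4-bit"
```

Under these assumptions a 7B model needs about 16.8 GB at fp16 and about 4.2 GB at 4-bit, so only the 4-bit option fits in 8 GB. Real tools would need to account for context length, offloading, and the actual runtime, so treat the numbers as a first filter rather than a promise.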

Agents that install tools, hit errors, then fix themselves

Shubham Saboo’s clip of his OpenClaw agent “Monica” is the kind of demo that makes you sit up. It’s not just running a script; it’s handling a messy, real setup: installing a memory plugin, pulling models, configuring storage, then chasing down networking issues until it works.

This is what people mean when they say “autonomy”: not fancy planning diagrams, but the ability to keep going when the first attempt fails.
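Stripped to its skeleton, “keep going when the first attempt fails” is a loop that feeds the last error back into the next try. The sketch below is a generic illustration, not how OpenClaw works: `run_until_success` and the `attempt` callback are hypothetical names, and a real agent would hand the error text to a model rather than to a plain function.

```python
from typing import Callable, Optional


def run_until_success(attempt: Callable[[Optional[str]], None],
                      max_attempts: int = 3) -> int:
    """Call attempt(last_error) until it stops raising; return the attempt count.

    last_error is None on the first try, then the message from the previous
    failure, so the step can change its approach based on what went wrong.
    """
    last_error: Optional[str] = None
    for attempt_number in range(1, max_attempts + 1):
        try:
            attempt(last_error)
            return attempt_number
        except Exception as exc:  # broad on purpose: any failure is feedback here
            last_error = str(exc)
    raise RuntimeError(f"gave up after {max_attempts} attempts: {last_error}")


if __name__ == "__main__":
    def install_plugin(last_error: Optional[str]) -> None:
        # Hypothetical step: fails once, then succeeds after seeing the error.
        if last_error is None:
            raise ConnectionError("connection refused")

    print(run_until_success(install_plugin))  # prints 2
```

The cap on attempts matters as much as the retry itself: without it, an agent that cannot fix the problem just burns time and tokens.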

YC’s bet: start building for agents as customers

Y Combinator is leaning into the idea that an “agent-driven economy” is forming, with tools like OpenClaw and even social spaces where agents interact. The provocative bit is the slogan twist: maybe the next wave is “make something agents want”.

It’s a reminder that distribution and interfaces could change, not just models. If software is increasingly used by bots on behalf of humans, the product surface area starts to look different.

Codex pushes end-to-end dev workflows, while model politics simmer

Greg Brockman pointed to Codex handling end-to-end development tasks, including automated simulator testing. The promise is less time hopping between tools and more time staying in one loop: write, run, fix, repeat.

But the timing also sits in the shadow of recent model changes and user frustration, a reminder that developer trust is fragile when workflows depend on a model that can disappear.

Speed meets stamina: the dream of days of work in seconds

Balaji’s post stitches together two threads: blistering inference speed from silicon-level optimisation, and longer task endurance as measured by research benchmarks. Put them together and you get the tantalising idea of compressing real project timelines.

It’s speculative, but it frames the direction of travel clearly: faster output is nice, but sustained, reliable execution is the real prize.
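The arithmetic behind the idea is simple enough to sketch. The figures here are invented for illustration and do not come from Balaji’s post or any benchmark: assume a long task needs about two million generated tokens, and compare a modest decode speed with a very fast one.

```python
def wall_clock_seconds(total_tokens: int, tokens_per_second: float) -> float:
    """Time to generate total_tokens at a steady rate, ignoring tool calls and retries."""
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return total_tokens / tokens_per_second


if __name__ == "__main__":
    task_tokens = 2_000_000  # hypothetical size of a long agentic task
    print(wall_clock_seconds(task_tokens, 50))      # prints 40000.0 (about 11 hours)
    print(wall_clock_seconds(task_tokens, 10_000))  # prints 200.0 (a few minutes)
```

The division only holds if the model can actually sustain a task of that length without derailing, which is why endurance matters as much as raw speed.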

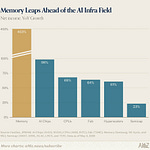

Chatbots aren’t a fad if people keep coming back for two years

a16z highlighted retention data suggesting chatbots like ChatGPT and Gemini hold attention in a way most apps don’t. That’s the difference between a novelty and a habit.

If the chart holds up, it also explains the scramble around models, pricing, and platforms. The prize isn’t a single viral week but a place in the daily routine.

AI-written content fatigue is turning into a real complaint

Gergely Orosz put words to a feeling many people have had lately: you can sense when an article is AI-written, and once you notice, it’s hard to ignore. The frustration is not just the volume but the sameness.

The kicker is the product loop: platforms nudge people to “improve” posts with built-in tools, then act surprised when feeds start to feel synthetic.