Overview

Today’s feed swung between big, slow-moving forces and fast, sharp tech moments: shrinking birth rates, families reorganising around housing costs, and a fresh round of anxiety about who controls the next wave of AI. In the mix were practical examples of people building personal tools with models, awkward questions about consent in crowdsourced mapping, and a reminder that geopolitics still has the power to pull focus.

The big picture

Two threads ran through almost everything: pressure on institutions (families, companies, states) and pressure on trust (who gets to collect data, who gets to build frontier models, and who gets to sell access to ‘intelligence’). The posts that landed hardest were the ones that made those pressures feel concrete, whether that was a fertility rate chart, a stadium of research posters, or a dog’s tumour response tied to an AI-designed vaccine.

Demographics as destiny, and the question of whether anyone can reverse it

Chamath Palihapitiya revived his old warning on demographic decline with a blunt update: the US fertility rate is now 1.6, and more than 110 countries sit below replacement. His take is that the drivers are mostly voluntary and reinforcing, from delayed parenthood to cost and culture, which makes neat policy fixes look optimistic.

It is a grim topic, but it is also a useful one to keep in view. If a shrinking population is the baseline for the coming decades, it changes how we think about growth, welfare systems, labour, and even what ‘progress’ looks like.

Multigenerational living stops being a ‘phase’ and starts looking like a plan

Matt Walsh posted a personal reversal: he no longer thinks pushing kids out at 18 is the goal, and he would rather see adult children stay at home until marriage, and even beyond. The replies turned it into something bigger than parenting philosophy, touching a nerve around housing costs, childcare, and what people can realistically afford.

Underneath the culture-war framing, there is a plain economic point. When rent and mortgages bite, families reorganise, and what used to be called dependence starts to look like resilience.

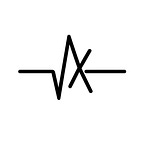

The ‘intelligence meter’ idea keeps coming back, for better or worse

A clip-driven line attributed to Sam Altman, that intelligence will be sold like electricity or water, made the rounds again, this time with a bleak caption. Even if you ignore the personalities, the underlying concept is sticking: pay-per-use cognition as infrastructure.

If that becomes the normal mental model, the fight quickly moves from “can you access AI?” to “what happens when the best thinking is priced like a utility, and who gets the cheaper tariff?”

Who gets to build runaway AI, and why it might be only three labs

Ethan Mollick argued that if recursive self-improvement arrives, it is likely to come from Google, OpenAI, or Anthropic, not from the chasing pack. His reasoning is simple: parity is hard to maintain, and the gap between frontier labs and everyone else seems to persist.

It is a tidy post that points to a messy outcome: concentration. If the next jump depends on rare talent, compute, data, and tight feedback loops, the list of plausible winners gets uncomfortably short.

Chollet’s counterpoint: the next leap is not another architecture tweak

François Chollet pushed back on the idea that a new deep learning architecture will unlock a step-change. His claim is that model tweaks buy small gains, but the core limitations around generalisation and reasoning remain, which suggests something lower-level and more fundamental has to give.

This is the familiar ARC-AGI worldview: scaling is not the same as understanding. Whether you agree or not, it is a useful check on the “next model will fix it” habit.

Niantic’s 30 billion images, and the uncomfortable consent gap

Bilawal Sidhu tried to calm the immediate panic around Niantic’s crowdsourced imagery, framing it as scans of public landmarks rather than neighbourhood surveillance. Still, the wider point landed: games can subsidise reality capture at a scale and price traditional mapping cannot match.

The ethical snag is not whether a park statue is private; it is whether players understood the afterlife of their contributions, especially when the output becomes commercial infrastructure for robotics and AR.

Personal ‘vibe-coded’ health dashboards are filling the gaps left by platforms

Alex Cohen shared a homebuilt health app stitched together with Claude: Oura sync, bloodwork uploads, scraped nutrition logs, and OCR on workout screenshots, stored locally. It reads like a small act of rebellion against fragmented dashboards and subscriptions, and also a glimpse of where personal software is heading.

The interesting part is not the tech stack; it is the behaviour. People are starting to treat AI as their integration layer, and they are doing it because nobody else is doing it for them in a way they trust.

Claude gets credentialed, and AI careers start to look more formal

Anthropic’s Claude Certified Architect exam is a sign that the market is trying to standardise what ‘good’ looks like in production AI work. It is also a marker of maturity: once there are exams, there are job ladders, course sellers, and hiring checklists.

There is a risk of credential theatre, of course, but it also helps explain why so many people are reorganising their time around model-specific skills.

Jensen Huang’s anti-1-on-1 stance, and the trade-off between scale and safety

A resurfaced clip had Jensen Huang saying he discourages 1-on-1s, preferring public information flow and group learning, even with an unusually wide span of control. In his framing, transparency scales, and private feedback loops do not.

It is a polarising idea for a reason. What works in a high-trust culture can turn nasty fast elsewhere, but it is also hard to ignore when the organisation in question keeps shipping.

Taiwan reports a spike in Chinese aircraft activity near the island

@unusual_whales amplified a POLITICO report that Taiwan detected 26 Chinese military aircraft near the island. The post’s tone leaned towards alarm, but the underlying fact pattern is part of a longer rhythm of pressure and response in the Strait.

Even when it is ‘routine’, it is still the kind of routine that markets, supply chains, and defence planners cannot afford to treat casually.

A DIY cancer vaccine story, and how quickly the AI credit fights arrive

X Freeze pointed out a detail being missed in the headlines: the entrepreneur behind an mRNA vaccine attempt for his dog says Grok produced the final construct, despite much of the coverage crediting ChatGPT. Beyond the platform rivalry, the story itself is startling: an individual trying to compress an R&D pipeline into a personal project.

It raises hard questions in the same breath as hope: validation, safety, oversight, and what happens when “personalised medicine” becomes accessible to anyone with enough money and confidence.