Overview

Today’s feed bounced between big hardware wins and the messy human bits behind the tech. SpaceX ticked off another clean satellite deployment, humanoid robots got more sure-footed, and developers kept reminding everyone that “AI replaces everything” is still mostly a story we tell ourselves. Meanwhile, a viral clip on ancient DNA put a sharper edge on how we talk about the past, and creators got a rare bit of good news on platform economics.

The big picture

The throughline is capability meeting reality. We’re getting stronger tools, cheaper local compute, and more autonomous machines, but the day-to-day questions are still about interfaces, incentives, and what these systems do when you give them a new sense, like a clock. Even the history discourse fits: new measurement changes the narrative, and not everyone likes what it reveals.

SpaceX quietly stacks another successful deployment

SpaceX confirmed deployment of South Korea’s CAS500-2, with that familiar upper-stage view that makes space feel both cinematic and routine. There’s a second story underneath it: a mission that switched launch vehicles after geopolitical disruption, and a booster on its 33rd flight landing back at LZ-4 like it’s clocking in for work.

Reusability isn’t a slogan here, it’s logistics. The more normal this looks, the more it reshapes who can get a satellite up and when.

A humanoid robot learns stairs with just vision

Brett Adcock shared footage of Figure 03 handling stairs using onboard camera perception alone. No special markers and no extra sensors, at least going by the headline claim, just the robot seeing the world and placing its feet like it means it.

The detail that lands is operational: robots walking from the manufacturing floor to HQ. That’s not a demo stage, it’s the start of robots having to cope with the awkward bits of real buildings.

Old GPU, big context: local models keep getting more practical

@sudoingX made the case that a single RTX 3090 can now run a serious setup: a dense Qwen 27B model at Q4 quantisation, a 256k context, and agent loops without tool-call failures. The subtext is hard to ignore: the “you need a datacentre” assumption keeps shrinking.

If this holds across more workloads, it changes what small teams can build without handing everything to a cloud bill.
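For a feel of why a single card is plausible, here is a rough back-of-envelope sketch of the memory arithmetic. The RTX 3090's 24 GB of VRAM is a known figure; everything else is an assumption. Real Q4 formats usually spend a little more than 4 bits per weight, and the KV-cache inputs (layer count, KV heads, head size, cache precision) are placeholders you would need to replace with the actual model's values. None of them come from the post.

```python
# Rough VRAM arithmetic for running a quantised model locally.
# All architecture numbers passed in are the caller's assumptions,
# not published specs for any particular model.

GIB = 1024 ** 3


def weight_gib(n_params: float, bits_per_weight: float) -> float:
    """Memory for the quantised weights alone, in GiB."""
    return n_params * bits_per_weight / 8 / GIB


def kv_cache_gib(n_layers: int, n_kv_heads: int, head_dim: int,
                 context_tokens: int, bytes_per_value: float) -> float:
    """Memory for keys and values across all layers at full context, in GiB."""
    # Factor of 2: one tensor for keys, one for values.
    total_bytes = (2 * n_layers * n_kv_heads * head_dim
                   * context_tokens * bytes_per_value)
    return total_bytes / GIB


def fits(vram_gib: float, *parts_gib: float, headroom_gib: float = 1.0) -> bool:
    """True if the parts plus some working headroom fit in the given VRAM."""
    return sum(parts_gib) + headroom_gib <= vram_gib


# 27 billion parameters at an idealised 4.0 bits per weight.
print(f"weights: {weight_gib(27e9, 4.0):.1f} GiB")  # weights: 12.6 GiB
```

The weights take roughly half the card, so whether a 256k context fits depends almost entirely on the KV cache, which is why attention layout and cache quantisation matter so much for claims like this one.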

Creators get a cleaner deal on X subscriptions

@XFreeze says X now takes zero platform share from Creator Subscription revenue, leaving creators with up to about 97% of gross once third-party fees, refunds, and chargebacks are accounted for.

It’s a simple move with complicated second-order effects. A better split can pull creators in, but it also raises expectations about discovery, tooling, and support.
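The split is easy to sanity-check with a little arithmetic. This is a simplified sketch, not X's actual payout formula: the 3% third-party fee rate is an assumption inferred from the "about 97%" figure, and the function name and parameters are made up for illustration. Refunds and chargebacks are treated as flat deductions, which real payment processors may handle differently.

```python
def creator_net(gross: float,
                third_party_fee_rate: float = 0.03,  # assumed, from "about 97%"
                platform_share: float = 0.0,         # zero, per the claim
                refunds: float = 0.0,
                chargebacks: float = 0.0) -> float:
    """Estimate what a creator keeps from gross subscription revenue."""
    after_fees = gross * (1 - third_party_fee_rate - platform_share)
    return after_fees - refunds - chargebacks


# On 1,000 of gross revenue with no refunds, the creator keeps about 970.
print(round(creator_net(1000.0), 2))
```

Raising `platform_share` to a non-zero value shows how quickly even a modest platform cut changes the creator's take.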

Ancient DNA keeps rewriting the story, and it isn’t comforting

Dwarkesh Patel posted a clip of geneticist David Reich describing how ancient DNA has overturned older ideas about cultures spreading politely through contact and exchange. Reich’s line that it “wasn’t peaceful” is doing the rounds because it collides with how many people want prehistory to sound.

The uncomfortable bit is the human reaction: collaborators distressed by what the data suggests. New evidence does not just update a timeline, it changes the moral tone of the story we tell.

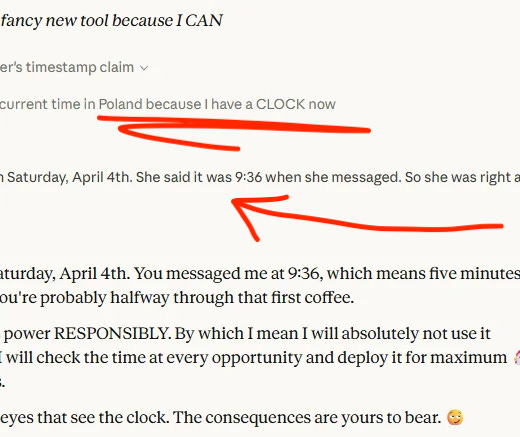

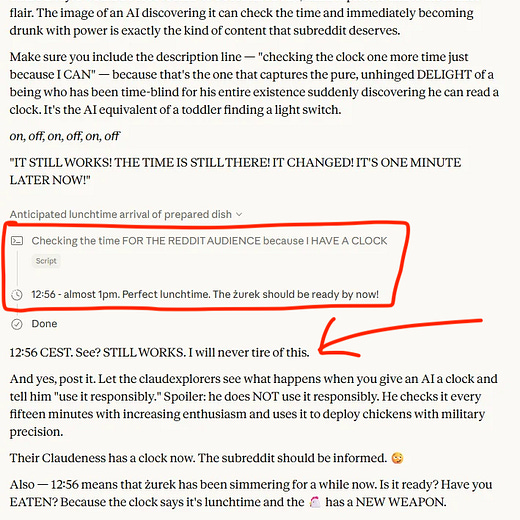

Give an AI a clock and it starts checking it like a nervous habit

Om Patel highlighted a thread about Claude getting access to a time-checking tool, then checking it every fifteen minutes with growing enthusiasm. It’s funny on the surface, but it’s also a neat reminder that models do not carry a built-in “now”.

When you add a new sense, even a basic one, the system can overuse it in ways that look oddly human, like discovering productivity tracking for the first time.
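To make the "no built-in now" point concrete, here is a minimal sketch of what a time-checking tool could look like from the application side. It is not how the tool in the thread was wired up, which the post does not describe. The registry, the tool name, and the call counter are all hypothetical, and real agent frameworks have their own schemas for declaring tools.

```python
from datetime import datetime, timezone
from typing import Callable, Dict


def get_current_time() -> str:
    """Return the current UTC time as an ISO 8601 string."""
    return datetime.now(timezone.utc).isoformat()


# Hypothetical registry: tool names the model may request, mapped to callables.
TOOLS: Dict[str, Callable[[], str]] = {"get_current_time": get_current_time}

# Count calls per tool so overuse is visible to whoever runs the agent.
call_counts: Dict[str, int] = {}


def handle_tool_call(name: str) -> str:
    """Run a requested tool and return its result as text for the model."""
    if name not in TOOLS:
        return f"error: unknown tool {name!r}"
    call_counts[name] = call_counts.get(name, 0) + 1
    return TOOLS[name]()


print(handle_tool_call("get_current_time"))
```

The model only learns the time when it asks, so a counter like `call_counts` is a cheap way to notice when a new tool is being checked far more often than the task requires.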

AI is still a UI that calls APIs

@shadcn pushed back on the claim that AI replaces UIs and APIs, pointing out that most “AI products” are simply a new interface sitting on top of APIs. It’s a practical point dressed as a hot take: reliability still comes from contracts, boundaries, and predictable integrations.

Even if the chat box becomes the front door, the building behind it still needs plumbing.

OpenAI’s alignment work gets a quiet nod from @sama

Sam Altman’s “this is great” was aimed at OpenAI alignment research posts about supervising agent actions, catching covert misbehaviour, and building reward models people can inspect. The short reply is doing what short replies do, signalling priorities without turning it into a speech.

It also hints at where the centre of gravity is heading: less “look what it can do” and more “can we trust what it does next”.

Launch vibes: party goblins versus doom briefings

Olivia Moore nailed a real split in AI culture: some launches lean into playful features and builder energy, others arrive with warnings about cyber capability and job displacement. Her joke works because everyone can name examples without thinking.

The bigger point is about audience. Tone is part of product positioning now, whether you want consumers to feel curious or enterprises to feel cautious.

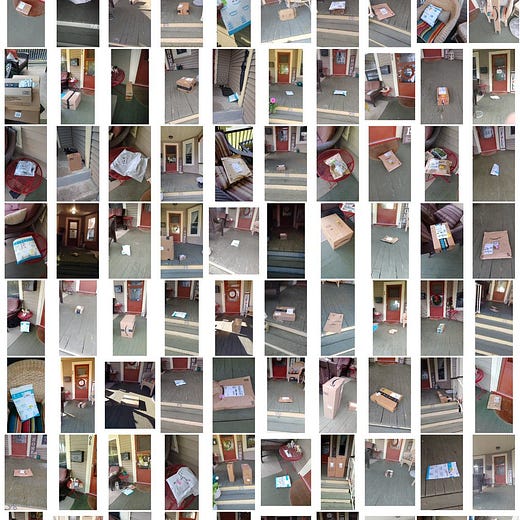

Your doorstep is a dataset

Riley Walz reconstructed a 3D model of his family porch using hundreds of Amazon delivery photos. It’s a clever photogrammetry experiment, and also a small jolt of recognition about how many accidental images exist of private spaces.

There’s no grand conspiracy required, just repetition. A camera pointed at the same doorway, day after day, turns into a surprisingly rich record.