Overview

Today’s feed had two big moods: builders showing off what modern coding models can do with old binaries, and everyone else squinting at the economics and ethics underneath it all. From AI resurrecting DOS and NES classics, to six-agent design swarms, to Claude Code getting more “agentic” day by day, the tools are getting sharper. Meanwhile, the weirdest headline of the day was still the neurons-on-a-chip Doom demo, which somehow feels like both a science milestone and a plot device.

The big picture

AI is creeping from “help me write a function” into “take this entire system and rebuild it”, whether that system is a legacy game executable, a full codebase, or a recurring workflow that runs on a schedule. At the same time, people are starting to argue about what’s scarce now: GPUs, electricity, data privacy, and even the right pricing model. The vibe is excitement, mixed with a nagging sense that we are normalising some strange stuff at speed.

AI brings back SkyRoads from a DOS EXE

Ammaar Reshi’s demo is the kind of thing that makes game preservation feel suddenly practical. He points Codex 5.4 at a 1990s DOS game with no source code and it just gets on with it: unpacking assets, disassembling the executable, rebuilding the renderer, and producing a Rust version you can actually run.

The fun part is the nostalgia, but the bigger point is capability. If models can repeatedly do this sort of reverse engineering, “abandonware” stops being a dead end and starts looking like a backlog.

https://x.com/ammaar/status/2030392563534893381

Three prompts to an AI-controlled Mario emulator

Pietro Schirano shows how quickly the floor is dropping out of tasks that used to be specialist work. In his clip, GPT-5.4 hacks a Super Mario Bros. ROM, exposes RAM events, then spins up a JavaScript emulator that can accept browser requests, turning the characters into AI-controlled agents.

It’s funny on the surface, but it also hints at how fast binary poking, modding, and security-adjacent tinkering are becoming “normal developer behaviour”.

https://x.com/skirano/status/2030405113286680852

Six design agents riffing on an app in real time

Peter Yang previews an episode with Pencil CEO Tom Krcha, and the quick video does the job: “swarm mode” is six design agents working in parallel, pushing UI layouts around like a tiny, tireless design team.

The detail that sticks is the under-the-hood coordination via JSON. It’s less magic, more structured constraints, which is probably why it looks usable rather than chaotic.

https://x.com/petergyang/status/2030305044806225950

Claude Code grows up: scheduler loops inside the tool

Ado’s post is short, but it signals a real change in how people will use Claude Code. A built-in scheduler, via /loop [interval] <prompt>, means you can run recurring tasks without glue scripts or brittle cron setups.

This is how “chat” quietly turns into “ops”. Once the tool can run jobs on a timer, it starts to feel like a lightweight agent you can rely on, for better or worse.
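For a feel of what a timed prompt loop involves, here is a minimal Python sketch. It is not how Claude Code implements /loop: the interval syntax (a number plus s, m or h), the names parse_interval and run_loop, and the max_runs cap are all assumptions made up for illustration.

```python
import re
import time
from typing import Callable

# Hypothetical interval syntax for illustration: a number plus s, m or h.
_UNITS = {"s": 1, "m": 60, "h": 3600}


def parse_interval(text: str) -> int:
    """Convert a string such as '5m' into a number of seconds."""
    match = re.fullmatch(r"(\d+)([smh])", text.strip())
    if match is None:
        raise ValueError(f"unrecognised interval: {text!r}")
    amount, unit = match.groups()
    seconds = int(amount) * _UNITS[unit]
    if seconds <= 0:
        raise ValueError("interval must be positive")
    return seconds


def run_loop(
    interval: str,
    task: Callable[[], str],
    max_runs: int,
    sleep: Callable[[float], None] = time.sleep,
) -> list[str]:
    """Run task once per interval, max_runs times, and collect the results."""
    delay = parse_interval(interval)
    results = []
    for run in range(max_runs):
        results.append(task())
        if run < max_runs - 1:  # no need to wait after the final run
            sleep(delay)
    return results
```

Passing in a fake sleep function lets you exercise the loop without waiting. A real scheduler also has to decide what happens when a run fails or overruns its interval, which this sketch ignores.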

https://x.com/adocomplete/status/2030382291479085073

Claude Code Skill Creator adds built-in tests

0xMarioNawfal flags an update to Claude Code’s Skill Creator: it now generates tests to measure trigger rates, so you can iterate on a skill with feedback rather than vibes.

If you’ve ever tried to make an agent behave consistently, this is the missing piece. Not the “cool demo” part, the boring loop of “did it fire when it should, and can we prove it?”.
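To make “did it fire when it should?” concrete, here is a small Python sketch of a trigger-rate check. It does not reflect the Skill Creator’s actual test format: the Case type, the trigger_rates function, and the keyword-based toy_fires stand-in for the agent are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Case:
    prompt: str
    should_trigger: bool  # label written by the skill author


def trigger_rates(cases: list[Case], fires: Callable[[str], bool]) -> dict[str, float]:
    """Measure how often the skill fired on prompts that should and should not trigger it."""
    wanted = [c for c in cases if c.should_trigger]
    unwanted = [c for c in cases if not c.should_trigger]
    hits = sum(fires(c.prompt) for c in wanted)
    false_alarms = sum(fires(c.prompt) for c in unwanted)
    return {
        "trigger_rate": hits / len(wanted) if wanted else 0.0,
        "false_trigger_rate": false_alarms / len(unwanted) if unwanted else 0.0,
    }


# Stand-in for the real agent: a keyword check, purely for demonstration.
def toy_fires(prompt: str) -> bool:
    return "invoice" in prompt.lower()


cases = [
    Case("Generate an invoice for ACME", True),
    Case("Draft a bill for last month's work", True),
    Case("Summarise this invoice dispute thread", False),
    Case("Centre this div", False),
]
print(trigger_rates(cases, toy_fires))
# → {'trigger_rate': 0.5, 'false_trigger_rate': 0.5}
```

Tracking both numbers matters: a skill that fires on everything scores a perfect trigger rate while being useless, so the false-trigger rate is the half that keeps the first one honest.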

https://x.com/RoundtableSpace/status/2030331392320532781

OpenCode desktop leans into codebase understanding

Kit Langton demos a feature that’s quietly becoming table stakes: point the tool at a repo, ask what it does, and get a coherent summary after it scans files and runs shell commands. In the clip it identifies a Node app using Canvas and SQLite and explains the shape of the project.

It’s less about “chat with your code” and more about not wasting your first day on a new codebase clicking around tabs.

https://x.com/kitlangton/status/2030351557988946081

“I gave Claude private company data just to centre a div”

spidey lands the meme of the day: the tiny problem that somehow convinces you to paste in the entire world. It’s a joke, but it also captures a real bad habit forming in teams, where convenience wins and “what data did we just upload?” becomes an afterthought.

If AI use is becoming default, the baseline for privacy literacy is going to need to rise fast.

https://x.com/lochan_twt/status/2030381471962460556

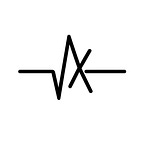

200,000 human neurons on a chip learn Doom

CG’s thread is the post people kept quote-tweeting, because it’s hard to read without pausing. Cortical Labs put lab-grown human neurons on silicon, trained them to play Doom in a week, and now sells the system for $35k, with racks consuming around a kilowatt.

The energy comparison is what makes it stick: biology doing adaptive work at power levels that make today’s data centres look absurd. The comments, though, are where the unease shows up, because once you say “wetware” out loud, you’re in ethics territory whether you like it or not.

https://x.com/cgtwts/status/2030372644101701720

The real bottleneck: getting your hands on GPUs

Dustin pulls a quote from Larry Ellison that sums up the new hierarchy nicely: billionaires begging Jensen Huang for more GPUs. Money isn’t the constraint when supply is capped by manufacturing, energy, and time.

It’s a reminder that “AI leadership” is starting to look like logistics. If you cannot secure compute, you cannot compete, no matter how big your chequebook is.

https://x.com/r0ck3t23/status/2030444400308842520

Are AI coding tools being sold below cost on purpose?

Karan’s post throws cold water on the happy “$200/month for superpowers” story. The claim is that heavy usage can cost thousands in compute, meaning some plans are subsidised heavily to win mindshare, and maybe dependency, before prices climb.

Even if the exact numbers get argued over, the broader question is fair: what happens when the subsidy era ends and teams have built their workflows around a tool they cannot afford at “true cost”?